Some movies, like Ghost in the Shell (2017) in 4K HDR, are too dark. Does CoreELEC support automatic HDR-to-SDR conversion? How do I set it up?

Yes. Auto is the default setting. You can find it in CE settings.

I don’t recommend watching HDR movies on an SDR screen.

As @Pelican said, HDR2SDR is on by default, but if your box has an S922X CPU (e.g. Odroid-N2 or others), the picture will be too dark because of problems with the tone mapping in this chip. There are also small picture artifacts on the N2 with HDR2SDR enabled (depending on the video).

My TV is a Samsung Q60, which supports HDR, but its maximum brightness is only 400 nits. HDR 4K movies look too dark on it. I also tried PotPlayer + LAV + madVR on my PC. The madVR renderer can control the HDR mode.

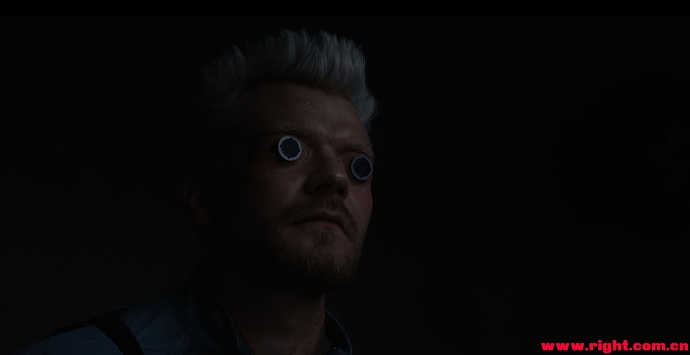

The following three pictures show the effect of HDR passthrough, HDR at 100 nits, and HDR at 400 nits in madVR.

Can CoreELEC provide optimization for HDR display like PotPlayer does?

Your TV supports HDR, so you don’t need HDR2SDR at all.

The first picture looks like HDR video played in SDR.

Yes, the TV supports HDR, but only in the sense that it accepts the HDR format. The actual result when playing HDR movies is very poor; it is better to turn HDR off.

The first picture is indeed the HDR movie played on an SDR device. The colors are worse, but the details are clearer.

I want to know whether CoreELEC can be forced to turn HDR off.

Are there any countermeasures to fix the S922X tone mapping issues? I use a GT-King Pro and it seems to have this issue. I output to a 4K HDR projector, and I’m forced to severely lower the HDR effect via the projector menu. I thought it was some sort of content problem… you opened my eyes.

You can have HDR to SDR or HDR passthrough in CoreELEC.

I think that HDR can be handled as follows:

- You can choose to force HDR passthrough, or turn off HDR to output in SDR mode. Even if the TV supports HDR, you can still choose to output SDR.

- If you select HDR output, you can manually set the reference brightness of the device. If the maximum brightness of the device is only 400 nits, you can output with hdr400 or lower to get better brightness. If the device supports 1000 nits, you can use hdr1000 output.

And I don’t know how to force SDR output in CoreELEC (even though my TV supports HDR), so that I can get the look of the first picture: the color is lighter but the details are clear.

Hmm - there seems to be a misunderstanding here about how HDR10 (aka PQ - Perceptual Quantizer) works.

Ignoring the HDR-on-SDR displays issue, if you have an HDR10 signal and an HDR10 display, the way PQ is supposed to work is that every pixel value in the source content should dictate an exact pixel light level on the display. If the display isn’t capable of outputting the brighter light levels in the source material, then it should clip or otherwise tone map to handle this. This is to allow the viewer at home to see as close to an identical picture to that of the mastering suite - with a 1:1 mapping between pixel value and light output. What you see at home should be what the director, DoP and colourist saw in the mastering suite.
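To make the "every pixel value dictates an exact light level" point concrete, here is a minimal sketch of the PQ transfer function as defined in SMPTE ST 2084 / ITU-R BT.2100. The function names `pq_eotf` and `pq_inverse_eotf` are just illustrative names chosen here, not part of CoreELEC or any library, and the sketch works on a normalised 0..1 signal rather than real 10-bit video levels.

```python
# PQ constants as given in SMPTE ST 2084 / ITU-R BT.2100.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PQ_PEAK_NITS = 10000.0  # PQ is defined on an absolute 0..10000 nit scale


def pq_eotf(signal: float) -> float:
    """Normalised PQ signal (0..1) -> absolute display luminance in nits."""
    if not 0.0 <= signal <= 1.0:
        raise ValueError("signal must be in the range 0..1")
    p = signal ** (1 / M2)
    return PQ_PEAK_NITS * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1 / M1)


def pq_inverse_eotf(nits: float) -> float:
    """Absolute luminance in nits (0..10000) -> normalised PQ signal (0..1)."""
    if not 0.0 <= nits <= PQ_PEAK_NITS:
        raise ValueError("nits must be in the range 0..10000")
    y = (nits / PQ_PEAK_NITS) ** M1
    return ((C1 + C2 * y) / (1 + C3 * y)) ** M2


if __name__ == "__main__":
    # Each light level has one fixed signal value, whatever the display is.
    for nits in (100, 400, 1000, 10000):
        print(f"{nits:>5} nits -> PQ signal {pq_inverse_eotf(nits):.4f}")
```

Note that 100 nits already sits at roughly half of the PQ signal range, which is why most of a well-mastered picture lives in the lower half of the code values.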

Properly mastered HDR material should be keeping all the regular picture material in the SDR range, and only using the >SDR range (i.e. pushing into HDR) for things like speculars (those glints on shiny metal), bright highlights (say car headlights, streetlights, neon signs etc. at night) or highlight detail (avoiding clouds clipping etc.).

SDR is now standardised as 0-100nits (previously there was no nits standard for SDR). This is the range that SDR mastering monitors are standardised to display. Anything above 100nits is defined as being in the HDR range. The bulk of an HDR-mastered movie will have its video content in the SDR range, i.e. be below 100nits, with the >100nits range reserved just for speculars, highlight detail etc. In a grading/colourist suite you’ll see picture monitoring where the colourist can see what bits of the picture they are pushing out of the SDR range into HDR - and they usually try not to push regular picture content into this range. (Some early Amazon Prime stuff like The Man In The High Castle was graded really hard - and pushed regular content into HDR ranges…)

HOWEVER the reality is that most people don’t sit in low ambient light grading suites, and don’t set their TVs to display SDR content at 0-100nits, and instead run their HDR TVs much brighter than this with SDR content - so their SDR range on their TV could be 0-200nits, 0-300nits or higher. This means that their SDR picture is bright (and pushing regular content well into the HDR range technically reserved for highlights, speculars etc.). As a result, properly mastered, and correctly displayed HDR, will appear dark when compared with ‘improperly’ displayed SDR…
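A rough numeric sketch of that comparison, assuming a plain 2.4 power-law gamma for SDR (a simplification of BT.1886 with a perfect black level). The helper `sdr_nits` and the example peak-white settings are made up for illustration only.

```python
def sdr_nits(signal: float, peak_white_nits: float, gamma: float = 2.4) -> float:
    """Light output for a normalised SDR signal (0..1) on a TV whose
    SDR peak white is set to `peak_white_nits`.

    Assumption: a pure power-law gamma, with no black-level offset.
    """
    if not 0.0 <= signal <= 1.0:
        raise ValueError("signal must be in the range 0..1")
    return peak_white_nits * signal ** gamma


if __name__ == "__main__":
    mid_signal = 0.5  # an arbitrary mid-level SDR pixel
    for peak in (100, 200, 300):
        print(f"SDR peak white {peak} nits -> {sdr_nits(mid_signal, peak):.1f} nits")
```

With SDR, the same pixel gets brighter in step with however the TV is set up: a TV running SDR at 300nits shows that pixel three times brighter than the 100nits reference. A PQ-compliant HDR10 display has no such freedom, so the same scene graded to the reference level looks dark next to it.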

This is the fundamental flaw in HDR10/PQ - the standard dictates the light level prescriptively.

Watching an HDR10/PQ image on a TV that is correctly aligned and can cope with max brightness of 200 nits, 400nits or 1000nits, the main bulk of the image should appear identical on all three - as it should largely be in the SDR 0-100nits range. The only difference should be that the HDR highlight picture content above 200, 400, 1000nits respectively is clipped (and how that is done is a processing decision by the TV manufacturer). However as important picture information is in the 0-100nit range on correctly mastered content, the result should just be highlights/speculars appear different, not things like faces etc.
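As a small sketch of that behaviour, assuming the simplest possible strategy (a hard clip at the display's peak; real TVs often use a softer roll-off that the manufacturer chooses). The function name `shown_nits` and the sample light levels are hypothetical.

```python
def shown_nits(source_nits: float, display_peak_nits: float) -> float:
    """Light level a PQ-compliant display would show for a mastered level.

    Assumption: hard clipping at the display peak, no roll-off curve.
    """
    return min(source_nits, display_peak_nits)


if __name__ == "__main__":
    face_nits = 80.0        # regular picture content, inside the SDR range
    highlight_nits = 600.0  # a specular highlight, well into the HDR range

    for peak in (200.0, 400.0, 1000.0):
        print(
            f"{peak:>6.0f}-nit display: "
            f"face {shown_nits(face_nits, peak):.0f} nits, "
            f"highlight {shown_nits(highlight_nits, peak):.0f} nits"
        )
```

The face comes out the same on all three displays; only the highlight changes.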

However - many people think the SDR 0-100nits range is often too dark (because they don’t like the SDR standard of max 100nits) - and to be honest they are right. It’s the fundamental flaw of the PQ/HDR10 (and Dolby Vision) flavour of HDR - where a precise mapping between video levels and light output is baked into the standard.

Some TV manufacturers allow you to basically make your TV non-HDR10/PQ compliant by stretching the 0-100nit SDR range to higher levels to make the standard picture brighter. Dolby Vision IQ adds this too.

The irony is that the HLG standard for HDR (used for live TV production, and the standard the BBC and NHK came up with) works like regular SDR TV. It doesn’t dictate a precise light level to video level mapping, so you can set brightness, contrast etc. as you would for SDR, and it has built-in support for using ambient light level detection to optimise the picture display (making the picture easier to look at in bright viewing conditions).

Bottom line - if you have an HDR TV and don’t like how HDR10 is displayed on it, chances are you don’t like the HDR10 standard and how it dictates things…

AML’s HDR2SDR tone mapping is below average.

That’s not the CE team’s fault; it comes from the AML SDK.

In addition to what noggin has said, what you want is not possible on CoreELEC.

You posted 4 pictures. Were the VIM + CE 9.1 pictures taken on an HDR display or an SDR display? With HDR output or HDR -> SDR? What about the Q5 pictures? And what SoC does the Q5 use?

In the pictures you posted, the two pictures of Q5 are brighter and more vivid. This is the HDR display effect that I want.

But in fact, when an HDR movie is shown on the Samsung Q60 TV, the brightness looks too low. I don’t know if the problem is the Samsung TV or the AML box output. It’s certainly not CE’s problem, because the result is the same when playing with Kodi or MX Player on Android.